Mojo is a new programming language built for AI and high-performance computing. It looks familiar at first glance, with Python-like syntax, simple structure, and easy readability, but what happens underneath is completely different.

The language is designed to run across CPUs, GPUs, and modern accelerators, using the same codebase, without forcing developers into separate systems for performance and development. That alone makes it stand out in a space where most tools are built around trade-offs.

The Mojo programming language is currently being positioned as a way to bring systems-level performance into the same environment where AI models are written and tested.

Read on to see what it offers: its performance, key features, role in AI work, and whether it could be the next big programming language for AI.

What Is Mojo Programming Language?

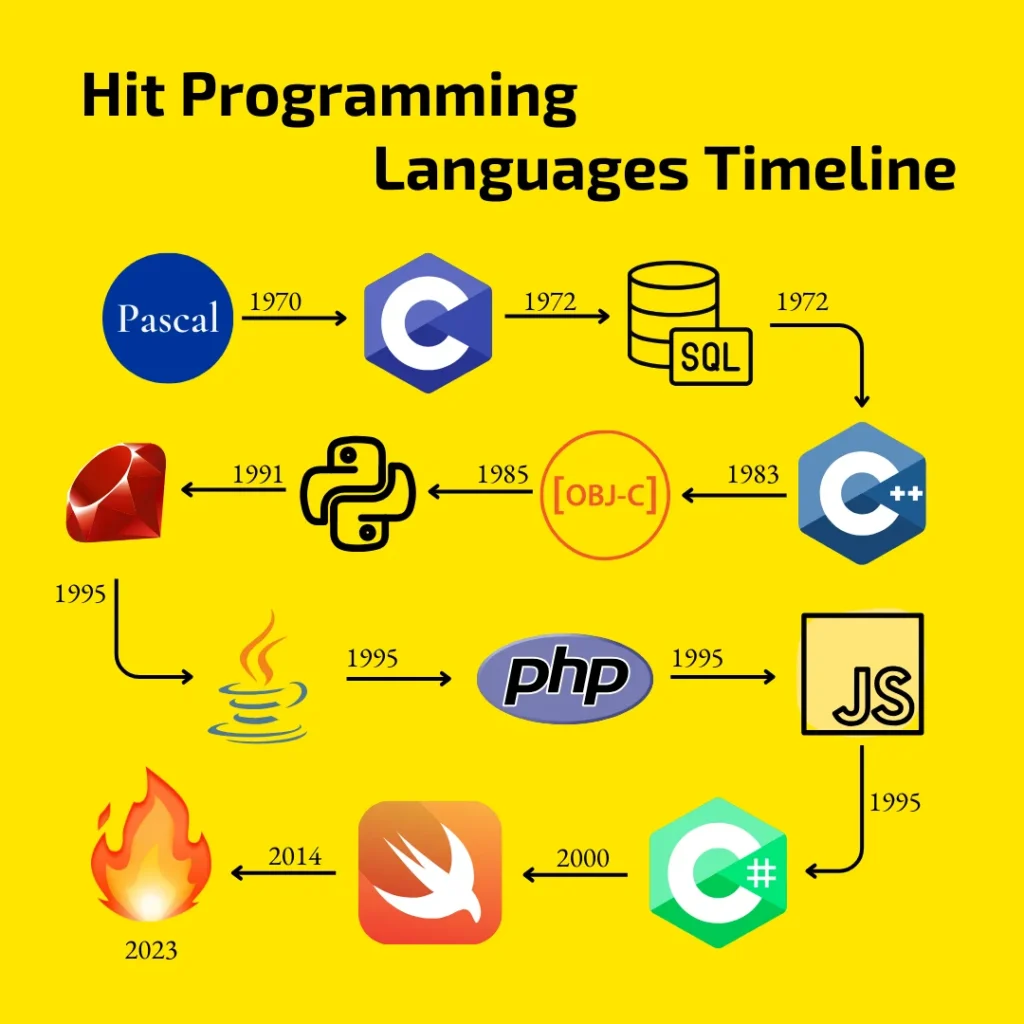

Mojo is a modern programming language developed by Modular Inc. and publicly introduced in 2023. It was announced with the goal of becoming a superset of Python over time. Today it keeps Python-like syntax while introducing systems-level performance capabilities, but not every Python program runs unchanged as Mojo.

Unlike traditional languages that specialize in either ease of use or performance, Mojo attempts to combine both:

- Python-like readability for fast development

- C/C++-level performance for compute-heavy workloads

- Native support for modern AI hardware like GPUs and accelerators

Mojo is often described as “Python with C++ performance,” but that description misses the deeper intent.

At its core, Mojo is an attempt to unify:

- high-level programming

- systems-level control

- and hardware execution

into a single programming model.

It keeps Python-like syntax so developers don’t lose productivity, but underneath, it introduces a compiler and execution system designed for modern hardware acceleration from the ground up.

Unlike traditional languages that separate “easy coding” from “fast coding,” Mojo treats both as part of the same system. That design choice is what makes it fundamentally different.

Why is it Called Mojo?

The name “Mojo” means magic and charm, which fits well for a language that boosts Python’s power. Mojo is about adding new abilities to Python, making it more capable and efficient. Mojo was created to work well with accelerators and systems used in areas like artificial intelligence. These systems often need more than what regular programming can do. By choosing a name that suggests magic, the creators wanted to show that Mojo can make Python perform in amazing, almost magical ways.

What is Magic?

Magic, in the context of Mojo, is a virtual environment and package manager that supports a variety of programming languages, including Python and Mojo. It is based on the conda and PyPI ecosystems and provides access to thousands of packages for Python and other languages. Magic can also manage environments for MAX and Mojo projects, so one tool covers both the Python and Mojo sides of a project.

Why Mojo Was Created: The Real Engineering Bottleneck in AI Systems

Modern AI systems are built as stacks of separate execution layers. Each layer solves a specific part of the workflow, but together they introduce complexity that grows faster than the models themselves.

Hardware execution is fragmented

AI workloads depend on different hardware ecosystems, each with its own programming model and optimization rules.

NVIDIA systems rely on CUDA, AMD systems rely on ROCm, and Intel systems use oneAPI. Code optimized for one environment does not transfer cleanly to another. Performance tuning, memory management, and kernel design often need to be rewritten for each target platform.

This leads to duplicated implementation work across hardware targets.

AI workflows span multiple execution environments

A typical AI system is divided across separate layers of development:

- model research and experimentation in Python

- performance-critical components in C++ or CUDA

- training and inference logic handled through frameworks like PyTorch or TensorFlow

Each layer introduces its own way of expressing the same core operations such as tensor computation, data processing, and optimization logic. As systems scale, the same functionality exists in multiple forms across the stack.

Python introduces execution constraints at scale

Python supports rapid development and experimentation, but large-scale workloads expose structural limits in its execution model:

- parallel execution is constrained by runtime design

- direct interaction with hardware is not exposed at the language level

- performance relies on external compiled extensions

This creates a separation between application logic and performance optimization, where critical computation is moved outside the main codebase.
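The reliance on compiled extensions can be seen even inside the standard library. The sketch below is only an illustration: it compares an explicit Python loop with the built-in `sum`, which CPython implements in C. The function name `python_loop_sum` and the input size are assumptions chosen for the example, and the timings will differ from machine to machine.

```python
import timeit


def python_loop_sum(values):
    """Sum values with an explicit loop; every iteration runs in the interpreter."""
    total = 0
    for v in values:
        total += v
    return total


data = list(range(200_000))

# Both approaches must agree on the result before timing is meaningful.
assert python_loop_sum(data) == sum(data)

# Time each approach over a few repetitions.
loop_time = timeit.timeit(lambda: python_loop_sum(data), number=3)
builtin_time = timeit.timeit(lambda: sum(data), number=3)

print(f"explicit loop: {loop_time:.4f}s")
print(f"built-in sum:  {builtin_time:.4f}s")
```

On most CPython builds the built-in version is noticeably faster, which is the pattern the list above describes: the fast path lives in compiled code outside the Python source.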

AI toolchains are distributed across specialized systems

Production AI pipelines are built using multiple independent tools:

- Training frameworks manage model development

- Inference engines handle optimized execution

- Serving systems manage deployment and scaling

Each tool operates under different assumptions about performance, memory usage, and execution flow. Integration across these systems becomes part of the engineering burden.

What this leads to

Across large AI systems, the main difficulty shifts from building models to managing consistency across execution layers, hardware targets, and toolchains.

Mojo is designed around this reality by providing a single programming environment that can express high-level logic and low-level execution behavior within the same system.

Features of the Mojo Programming Language: Why Mojo is a Game-Changer for AI Developers?

Hardware Is Not an Afterthought Anymore

The most important shift Mojo introduces is architectural, not syntactic. Instead of treating programming as layers of abstraction stacked on top of each other, Mojo attempts to make the following part of one continuum rather than separate concerns:

- high-level logic

- low-level optimization

- hardware execution

This means performance tuning is not something you “drop into later”; it is part of the same language you write from the beginning. That alone changes how AI systems are designed. Most programming languages treat hardware as something you “target” indirectly through libraries or drivers.

Mojo changes that relationship. It is designed to treat modern accelerators, especially GPUs, not as external systems but as native execution targets. This matters because many AI workloads today are no longer CPU-bound; their computation is distributed across GPUs and specialized accelerators. Mojo’s design reflects that reality by making parallel execution and hardware awareness part of the language itself, rather than an external optimization step.

Python Compatibility: Not a Feature, but a Strategy

One of Mojo’s most important design decisions is its compatibility with Python. But this is often misunderstood. This is not about convenience. It is about adoption survival.

Instead of forcing developers to abandon existing Python ecosystems, Mojo allows gradual integration. Developers can:

- reuse Python libraries

- migrate performance-critical components

- incrementally optimize systems without rewrites

This makes Mojo less of a replacement and more of an evolutionary layer on top of existing systems.
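Incremental migration starts with knowing which component is actually performance-critical. As a minimal sketch on the Python side, the standard `cProfile` and `pstats` modules can rank functions by time spent. The pipeline here (`normalize`, `pairwise_distance_sum`, `pipeline`) is hypothetical and exists only to give the profiler something to measure.

```python
import cProfile
import io
import pstats


def normalize(values):
    """Scale values so they sum to 1 (cheap, linear time)."""
    total = sum(values)
    return [v / total for v in values]


def pairwise_distance_sum(values):
    """Deliberately quadratic; stands in for a hypothetical hot spot."""
    acc = 0.0
    for a in values:
        for b in values:
            acc += abs(a - b)
    return acc


def pipeline(n=300):
    values = normalize(list(range(1, n + 1)))
    return pairwise_distance_sum(values)


# Profile one run of the pipeline.
profiler = cProfile.Profile()
result = profiler.runcall(pipeline)

# Collect the five most expensive entries by cumulative time.
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer)
stats.sort_stats("cumulative").print_stats(5)
report = buffer.getvalue()
print(report)
```

In a report like this, the quadratic function dominates, so it would be the first candidate to rewrite in a faster language while the rest of the pipeline stays in Python.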

Unlocking Python’s Performance Limitations

Python has been a go-to language for AI development, but it struggles with performance when handling heavy computations or processing vast amounts of data. Mojo tackles this issue by offering the same ease of use and familiar syntax as Python while adding the speed of languages like C++ and CUDA. This means developers can enjoy Python’s simplicity without compromising on performance, especially in critical AI tasks where speed is essential.

One Language for All Tasks

One of Mojo’s key advantages is its versatility. Instead of requiring developers to juggle multiple languages (Python for AI development, C++ for performance-heavy tasks, and CUDA for hardware acceleration), Mojo lets them handle all of this in one language. This unified approach simplifies development, reduces complexity, and makes code easier to maintain, as developers no longer need to switch between different programming environments for different parts of their projects.

Integration with AI Frameworks

Mojo can import Python modules, so popular AI libraries like TensorFlow, PyTorch, and Scikit-learn remain usable from Mojo code. Developers can continue using these familiar tools to build, train, and deploy AI models while moving performance-critical pieces to Mojo. Because imported libraries still run through the Python runtime, the speedup applies to the parts written in Mojo, not to the library calls themselves.

Support for Accelerators (GPUs and ASICs)

One of Mojo’s standout features is its ability to support advanced hardware, such as GPUs and ASICs, which are often required for AI and machine learning applications. These accelerators help process large amounts of data quickly, and Mojo is designed to tap into this power, making it an ideal language for AI developers who need high performance for training models and running complex algorithms.

Just-In-Time and Ahead-of-Time Compilation

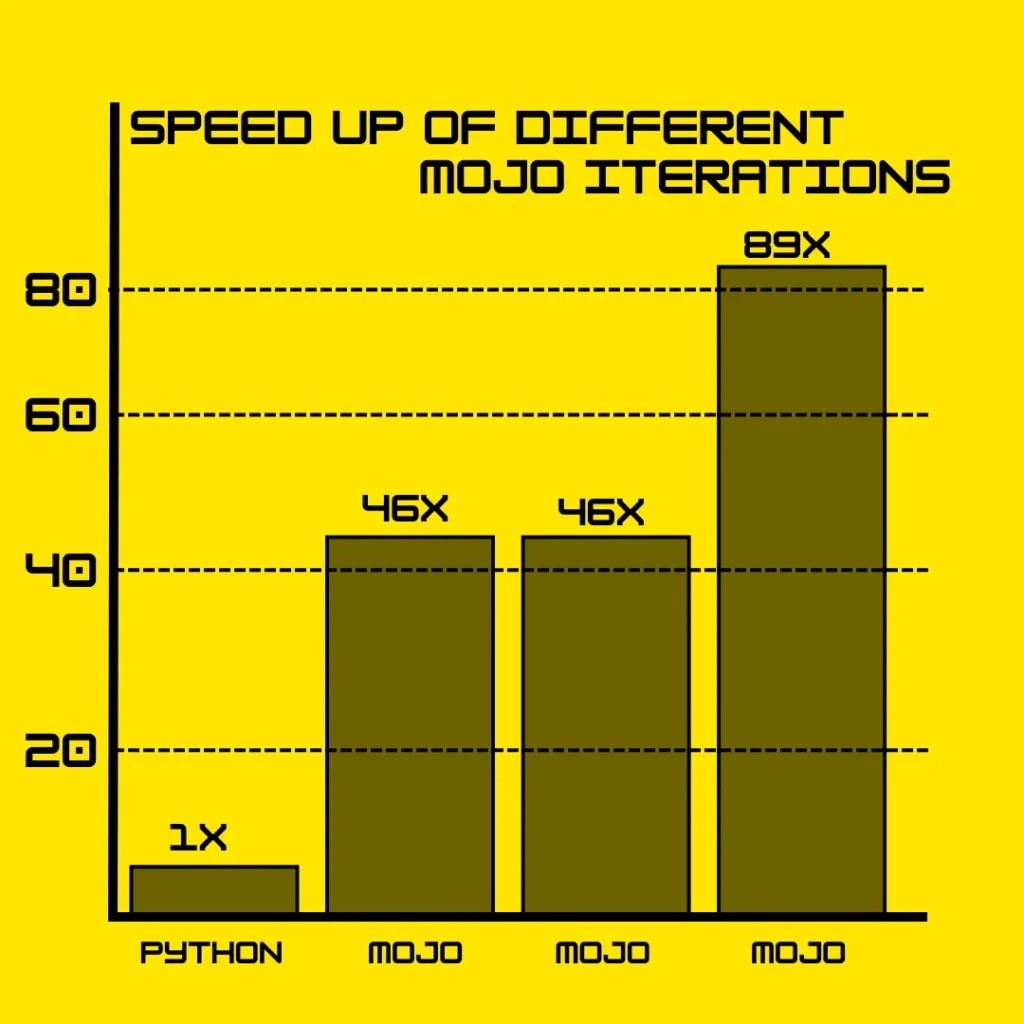

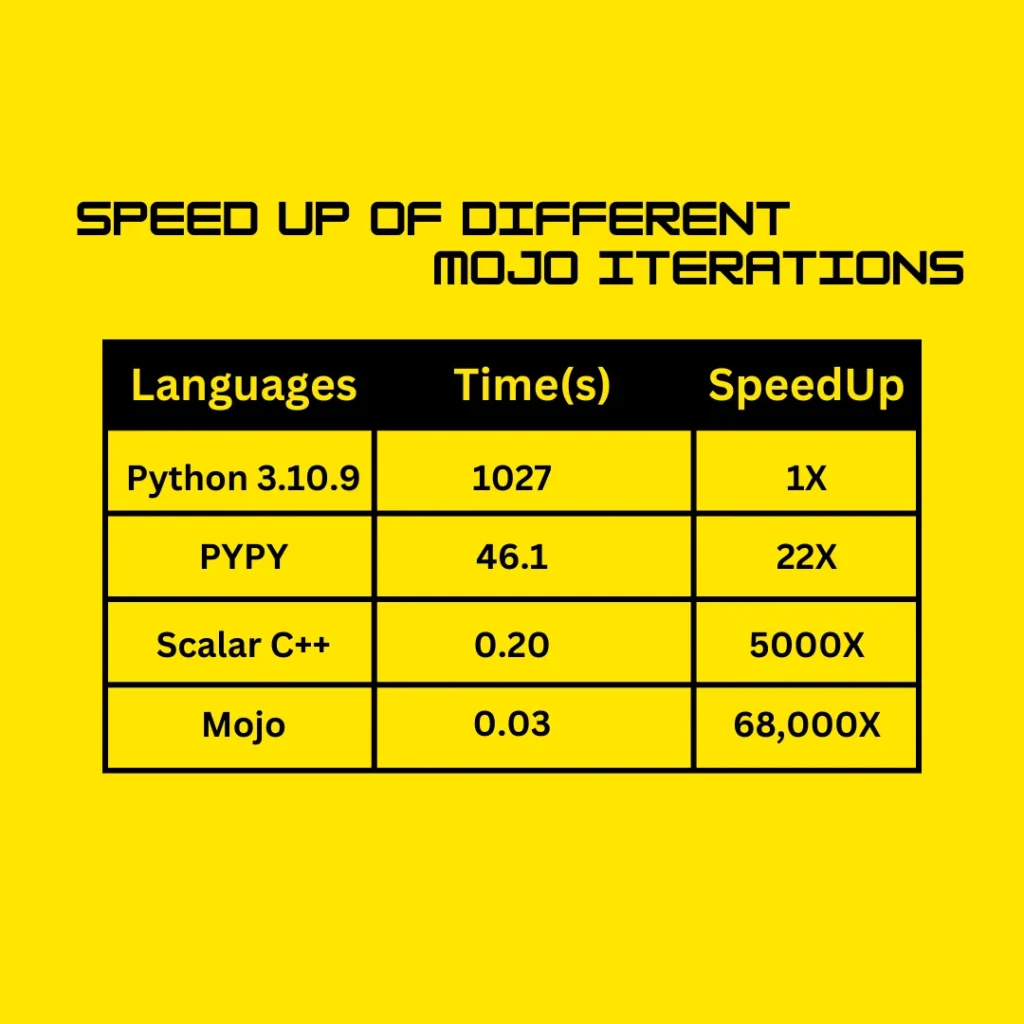

To further boost efficiency, Mojo uses a combination of Just-In-Time (JIT) and Ahead-of-Time (AOT) compilation techniques. JIT compilation compiles and optimizes code while the program is running, which suits interactive use. AOT compilation compiles code before execution, reducing runtime delays. Together with static typing and hardware-aware optimization, these methods underpin the headline claim that Mojo can run up to 68,000 times faster than Python in some cases. That number is a best-case figure from selected benchmarks, and real speedups depend heavily on the workload.

Ecosystem Reality: Strengths and Limitations at the Same Time

Mojo is still early in its lifecycle, and that reality matters. On one hand, it is actively evolving with a strong engineering direction. On the other hand, it does not yet have the ecosystem maturity of Python.

Key constraints today include:

- limited third-party libraries

- smaller community base

- no native Windows support

- proprietary governance model under Modular Inc.

At the same time, early-stage systems often evolve fastest precisely because they are not constrained by legacy decisions.

Mojo vs Other Programming Languages

Mojo vs Python

Python is popular for AI because of its simple syntax and extensive libraries, but it struggles with performance, especially for heavy computations. Mojo addresses this by combining Python’s ease of use with compiler optimizations that, in some benchmarks, make it up to 68,000 times faster than Python. This makes Mojo an appealing alternative for developers who need Python’s simplicity with far better performance for demanding AI tasks.

Mojo vs C++

C++ is known for its high performance but can be difficult to learn and use due to its complex syntax. Mojo offers similar computational power with a much simpler, Python-like syntax. This makes Mojo a more approachable choice for developers needing C++-level performance without the steep learning curve.

Mojo vs Java

Java is flexible and scalable, but in AI, Mojo stands out. Mojo is designed for AI-specific tasks and integrates easily with hardware accelerators like GPUs and ASICs. While Java is reliable for general programming, Mojo’s focus on performance and ease of use makes it better suited for high-performance AI applications.

Applications of Mojo Programming Language in AI

Natural Language Processing (NLP)

Mojo is a great choice for natural language processing tasks like sentiment analysis, translation, and language generation. Its ability to leverage hardware accelerators, such as GPUs, makes it ideal for handling large datasets and performing complex computations quickly. Since NLP often involves processing vast amounts of text data, Mojo’s speed improvements over Python can lead to faster model training and more efficient inference, making it a great choice for text-based AI applications.

Computer Vision

In computer vision tasks, such as object detection, image classification, and facial recognition, performance is key. Mojo’s optimizations help handle the high computational demands of these tasks. With support for accelerators like GPUs and easy integration with AI libraries, developers can use Mojo to boost the performance of vision-based AI applications. This means faster processing of image data and more accurate real-time analysis, which is critical in fields like security, healthcare, and autonomous systems.

Reinforcement Learning

Reinforcement learning (RL) models, especially in complex simulations and training environments, require great computing power. Mojo’s high performance allows developers to run more iterations and simulations in less time, speeding up the training process. With its ability to handle demanding computations efficiently, Mojo is an excellent choice for RL applications, where the goal is to find optimal solutions through trial and error in dynamic environments.

IDEs and Tools for Mojo Development

Supported IDEs

Developers working with Mojo can use popular Integrated Development Environments (IDEs) like Visual Studio Code and PyCharm. These IDEs offer useful features like syntax highlighting, code completion, and debugging support, which make coding in Mojo more efficient. Since Mojo’s syntax is similar to Python’s, these tools make it easy for developers familiar with Python to transition smoothly into Mojo.

Mojo Playground

For those looking to quickly experiment with Mojo, there’s the Mojo Playground, a browser-based platform designed for rapid prototyping. It’s an ideal tool for trying out new ideas without needing to set up a full development environment. You can write and execute Mojo code directly in the browser, which makes it convenient for testing features or learning the language. The Playground simplifies the process of getting started with Mojo, making it accessible for beginners and experienced developers alike.

The Future of Mojo: Replacement or Layer?

The most common question is whether Mojo will replace Python. That framing is misleading. A more realistic future looks like this:

Python remains the dominant language for general AI development, while Mojo evolves as a performance layer beneath it.

In that model:

- Python defines intent

- Mojo handles execution efficiency

Whether Mojo expands beyond that depends less on syntax and more on ecosystem growth, tooling maturity, and industry adoption.

Getting Started with Mojo Programming Language

To start coding in Mojo, you need to install it on your system using our step-by-step guide on Mojo installation. The guide covers installation on different operating systems, including Windows, Linux, and Mac. It is kept up to date, and every step was tested before publication.

We also have a Mojo tutorial series that covers different coding topics with real-world examples, easy code snippets, and visuals.

Conclusion

Mojo has the potential to change the way AI development is done by combining the easy-to-learn style of Python with the speed of powerful languages like C++. Its ability to handle complex tasks at fast speeds makes it a great choice for AI, machine learning, and data-heavy projects.

Although it’s still new, Mojo could shape the future of programming, especially for performance-demanding tasks. As more tools and resources are developed, Mojo might become a key language in the field. Developers interested in future technologies should give Mojo a try.

FAQs

What is Mojo, and who created it?

Mojo is a relatively new programming language that was developed by Modular Inc and launched in 2023. It is designed to combine Python’s simplicity with the performance of languages like C++, making it ideal for AI, machine learning, and high-performance tasks.

How is Mojo different from Python?

While Mojo builds on Python’s easy-to-read syntax, it can run up to 68,000 times faster in some cases. This makes Mojo more suitable for tasks that require heavy computations, such as AI model training and data processing.

Why is Mojo faster than Python?

Mojo uses advanced compilation techniques, like Just-In-Time (JIT) and Ahead-of-Time (AOT) compilation, which significantly boost execution speed compared to Python’s interpreted, dynamically typed execution.

Is Mojo a replacement for Python?

Mojo has the potential to complement Python in performance-critical areas, but due to Python’s massive community, libraries, and ecosystem, it’s unlikely that Mojo will fully replace Python. However, Mojo is a great option for developers working on intensive AI projects.

What are the main applications of Mojo in AI?

Mojo is highly suited for Natural Language Processing (NLP), Computer Vision, and Reinforcement Learning due to its speed and ability to handle complex simulations and data-heavy AI tasks.

What challenges does Mojo face in becoming a mainstream language?

Mojo is developed under Modular’s control, and parts of its toolchain are not open source, so community-driven development is limited. Its ecosystem is also still growing. To become mainstream, it will need to build a larger community and more libraries to match Python’s extensive resources.